Stop choosing between speed and accuracy—the 2026 AI stack demands you pick the right “brain” architecture for the specific bottleneck you are facing.

Think of these three concepts as different ways an AI “studies” for a test: RAG is an open-book exam (looking up answers), CAG is cramming (memorizing the book beforehand), and KAG is a detective’s investigation (connecting clues to solve a mystery).

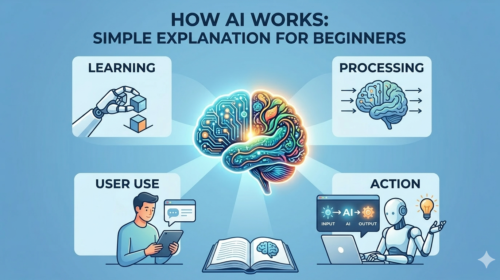

The Simple Explanation

The Problem: AI Forgetfulness

Imagine ChatGPT is a very smart student who graduated in 2023. If you ask about an event that happened today, it doesn’t know the answer because it “left school” years ago. To fix this, developers use three special tricks to give the AI new information.

1. RAG (Retrieval-Augmented Generation)

The “Open-Book Test” Method

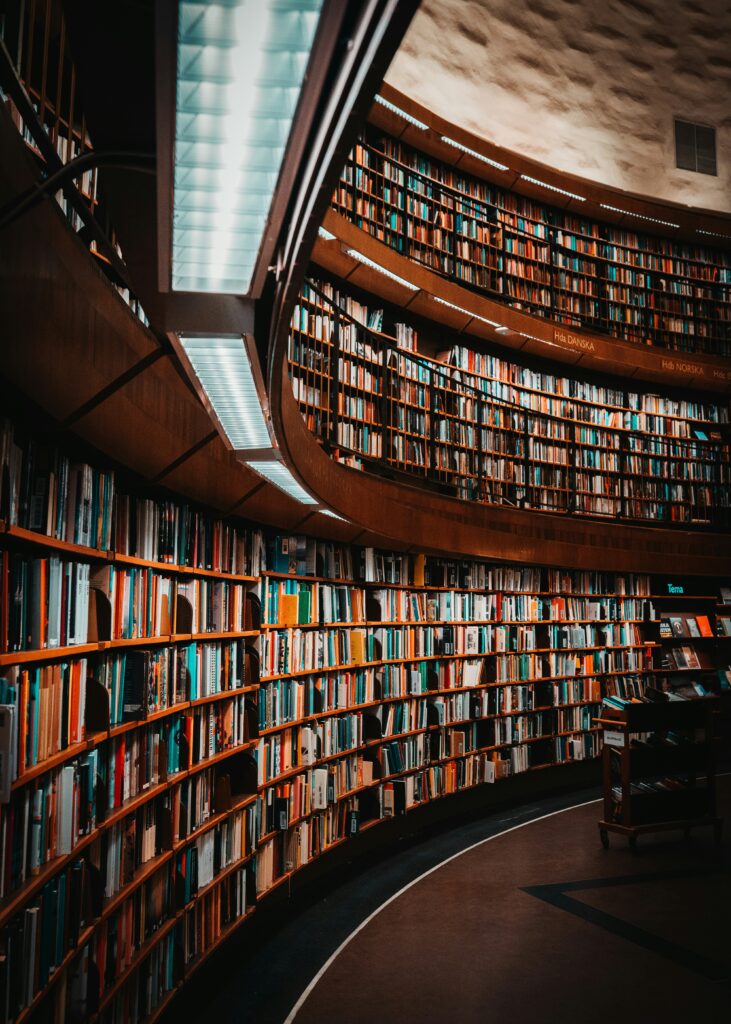

Imagine you are taking a test but you don’t know the answers. However, you are allowed to run to the library, find the right book, and copy the answer onto your test paper.

- How it works: The AI doesn’t know the answer, so it “Googles” your internal documents, finds a specific paragraph, and reads it to you.

- Pro: You can have a library with millions of books (Infinite data).

- Con: It takes time to run to the library and find the right page (Slower).

2. CAG (Cache-Augmented Generation)

The “Photographic Memory” Method

Imagine you have a photographic memory. Before the test, you read the entire textbook. Now, when the teacher asks a question, you don’t need to look it up—the answer is already in your head.

- How it works: You upload the whole document (like a PDF manual) into the AI’s short-term memory at the start. It “remembers” everything instantly.

- Pro: It is lightning fast. No searching required.

- Con: You can’t memorize the whole library—only one or two books at a time (Limited space).

3. KAG (Knowledge-Augmented Generation)

The “Detective Board” Method

Imagine a detective’s wall covered in photos connected by red string. RAG finds a fact, but KAG understands how that fact connects to others.

- How it works: Instead of just reading text, the AI builds a map of relationships (e.g., “Alice” works for “Bob”, “Bob” manages “Project X”). It follows the “red string” to answer complex questions like “How does Alice’s boss affect Project X?”.

- Pro: It solves complex logic puzzles that confuse normal AI.

- Con: It is very hard to set up (You have to build the “detective board” first).

The Core Distinction:

RAG (Retrieval-Augmented Generation) is a Search Engine: Best for massive, dynamic datasets where you fetch only what you need.

CAG (Cache-Augmented Generation) is RAM: Best for small, static, high-frequency contexts where you preload everything for near-zero latency.

KAG (Knowledge-Augmented Generation) is an Expert System: Best for complex reasoning where “connecting the dots” via Knowledge Graphs matters more than raw retrieval.

The Developer’s Decision Matrix

Architecture Comparison

| Feature | RAG (Retrieval) | CAG (Cache) | KAG (Knowledge) |
|---|---|---|---|
| Analogy | Google Search | RAM / Memory | Mind Map |
| Mechanism | Vector Search (Chunks) | Preloaded KV Cache | Graph + Logic |
| Latency | High (Retrieval Step) | Lowest (Instant) | Variable (Reasoning) |
| Data Scale | Unlimited (Billions) | Limited (Context Window) | Structured Domains |
| Best For | Enterprise Search | Manuals & Contracts | Medical/Legal Logic |

1. RAG: The Dynamic Librarian

When “Good Enough” Search Scale Matters

Retrieval-Augmented Generation remains the industry standard for querying massive, shifting datasets (e.g., Wikipedia, daily news, enterprise logs). It relies on a “Just-in-Time” delivery model: finding relevant chunks via vector similarity and feeding them to the LLM.
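To make "vector similarity" concrete, here is a minimal sketch of the ranking step in plain Python. The three-dimensional vectors and chunk texts are hand-made for illustration only; a real system would get its vectors from an embedding model and store them in a vector database.

```python
import math

def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def top_k(query_vec, chunks, k=2):
    """Return the k chunk texts whose vectors are most similar to the query."""
    ranked = sorted(chunks, key=lambda c: cosine(query_vec, c["vector"]), reverse=True)
    return [c["text"] for c in ranked[:k]]

# Made-up "embeddings": the first axis loosely stands for "revenue-related"
chunks = [
    {"text": "Q3 revenue summary", "vector": [0.9, 0.1, 0.0]},
    {"text": "Office relocation notice", "vector": [0.0, 0.2, 0.9]},
    {"text": "Q2 revenue summary", "vector": [0.8, 0.3, 0.1]},
]
query_vec = [1.0, 0.0, 0.0]  # stands in for the embedded question

results = top_k(query_vec, chunks, k=2)
print(results)
```

Only the top-ranked chunks reach the LLM, which is why RAG scales to very large collections: the model never sees the rest.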

The Bottleneck:

RAG introduces latency because every query triggers a database lookup. It also suffers from “context fragmentation”—if the chunker cuts a paragraph in half, the answer quality degrades.
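One common way to soften context fragmentation is to let neighboring chunks overlap, so a sentence cut at one boundary still appears whole in the next chunk. The sketch below splits on words for simplicity; the `size` and `overlap` values are arbitrary assumptions, and production chunkers usually split on tokens or sentences instead.

```python
def chunk_words(text, size=8, overlap=3):
    """Split text into word windows of `size` that share `overlap` words with the previous window."""
    if not 0 <= overlap < size:
        raise ValueError("overlap must be in [0, size)")
    words = text.split()
    step = size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + size]))
        if start + size >= len(words):
            break  # the last window already reached the end of the text
    return chunks

text = "one two three four five six seven eight nine ten eleven twelve"
chunks = chunk_words(text, size=8, overlap=3)
for chunk in chunks:
    print(chunk)
```

Here "six seven eight" lands in both chunks, so a fact that straddles the boundary is still retrievable in one piece. The trade-off is a larger index, since overlapping text is stored twice.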

Implementation Pattern (Python/LangChain Concept):

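```python
# RAG: Retrieve -> Augment -> Generate
# Assumes `docs`, `embeddings`, `llm`, and a Pinecone index ("my-index" is a placeholder) already exist
from langchain_pinecone import PineconeVectorStore

vector_store = PineconeVectorStore.from_documents(docs, embeddings, index_name="my-index")
retriever = vector_store.as_retriever()

# The retrieval step adds latency (roughly 500 ms to 2 s, depending on the store and network)
question = "What is the Q3 revenue?"
relevant_docs = retriever.invoke(question)

# Join the chunk text rather than interpolating the Document objects themselves
context = "\n\n".join(doc.page_content for doc in relevant_docs)
response = llm.invoke(f"Context:\n{context}\n\nQuestion: {question}")
```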

RAG Architecture: The model relies on an external Vector Database to fetch “chunks” of text, which are then pasted into the context window.

2. CAG: The Speed Demon

Eliminating the Retrieval Step

Cache-Augmented Generation (CAG) is gaining traction in 2026 for “static context” problems. Instead of searching for data, you preload the entire dataset into the LLM’s long-context window (or KV Cache) once. Subsequent queries hit the cached state directly, eliminating the retrieval round-trip.

The “A-Ha” Moment:

A 500-page manual is roughly 300,000 tokens, which fits entirely inside a 1M-token context window such as Gemini 1.5 Pro’s (though not inside GPT-4o’s 128K-token window). If it fits, why retrieve chunks? Just feed the whole book in and cache the state.
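Before committing to CAG, it is worth a quick back-of-the-envelope check that the document actually fits. The sketch below assumes about 650 tokens per page (a rough heuristic for dense English prose, not a measured value) and treats the window sizes as plain parameters; for real decisions, count tokens with your provider's tokenizer.

```python
def estimate_tokens(pages, tokens_per_page=650):
    """Rough token estimate; 650 tokens per page is an assumed heuristic."""
    return pages * tokens_per_page

def fits(pages, window_tokens, reserve_tokens=8_000):
    """True if the document plus a reserve for the question and answer fits in the window."""
    return estimate_tokens(pages) + reserve_tokens <= window_tokens

manual_tokens = estimate_tokens(500)
print(f"Estimated size: {manual_tokens:,} tokens")
print("Fits in a 128K-token window:", fits(500, 128_000))
print("Fits in a 1M-token window:", fits(500, 1_000_000))
```

Under these assumptions the manual needs a window in the high hundreds of thousands of tokens, so the choice of model matters as much as the choice of architecture.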

Best For:

- Product Manual Chatbots: The manual never changes.

- Legal Document Analysis: You are analyzing one specific case file.

- Financial Reports: Analyzing a single 10-K iteratively.

Implementation Pattern:

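```python
# CAG: Preload -> Cache -> Chat
import datetime

import google.generativeai as genai
from google.generativeai import caching

# 1. Upload file to cache storage (e.g., Gemini Context Caching)
# `document_file` is assumed to be a file already uploaded via genai.upload_file(...)
cache = caching.CachedContent.create(
    model='models/gemini-1.5-flash-001',
    contents=[document_file],
    ttl=datetime.timedelta(minutes=10),
)

# 2. Query directly (Zero Retrieval Latency)
# The model "remembers" the document for as long as the cache lives (the ttl above).
model = genai.GenerativeModel.from_cached_content(cached_content=cache)
response = model.generate_content("Summarize the liability clause.")
```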

3. KAG: The Logic Engine

Solving the “Reasoning Gap”

Knowledge-Augmented Generation (often implemented via GraphRAG) addresses the biggest flaw in vector search: relationships. Standard RAG treats data as flat text. KAG maps entities (People, Places, Rules) into a Knowledge Graph, allowing the AI to “hop” between connected facts.

The Problem Solved:

If you ask RAG, “How does the CEO’s new policy impact the subsidiary in France?”, it might find the policy and the subsidiary but miss the connection. KAG traverses the graph: Policy -> affects -> Region -> contains -> Subsidiary.

Knowledge Graph Structure: Instead of vectors, KAG uses nodes and edges. This allows the LLM to perform “multi-hop reasoning” to answer complex questions that require traversing a logical path.

Graph Construction: Extracts entities and relationships from text to build a structured web of logic.

Reasoning: The LLM queries the graph (Cypher/SPARQL) to verify facts before generating an answer.
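The multi-hop idea can be sketched without a graph database at all. The example below stores hypothetical (subject, relation, object) triples in a dictionary and uses breadth-first search to recover the chain of hops between two entities. The `CEO issued Policy` triple is invented for illustration, and a production KAG system would run an equivalent query in Cypher or SPARQL against a real graph store.

```python
from collections import deque

# Hypothetical triples mirroring the policy example above
TRIPLES = [
    ("CEO", "issued", "Policy"),
    ("Policy", "affects", "Region"),
    ("Region", "contains", "Subsidiary"),
]

def build_graph(triples):
    """Map each subject to its outgoing (relation, object) edges."""
    graph = {}
    for subj, rel, obj in triples:
        graph.setdefault(subj, []).append((rel, obj))
    return graph

def find_path(graph, start, goal):
    """Breadth-first search; returns the hop chain from start to goal, or None."""
    queue = deque([(start, [start])])
    seen = {start}
    while queue:
        node, path = queue.popleft()
        if node == goal:
            return path
        for rel, nxt in graph.get(node, []):
            if nxt not in seen:
                seen.add(nxt)
                queue.append((nxt, path + [rel, nxt]))
    return None

graph = build_graph(TRIPLES)
path = find_path(graph, "Policy", "Subsidiary")
print(" -> ".join(path))
```

The returned path is the "red string": it can be handed to the LLM as explicit evidence, instead of hoping two separately retrieved chunks happen to mention the same link.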

Common Architecture FAQs

1. Is RAG dead in 2026?

No, but “Naive RAG” is. The era of simply chunking text into a vector database and retrieving the top-3 matches is over. However, RAG remains the only viable solution for infinite-scale datasets (like the entire internet or terabytes of corporate history). You cannot fit 5TB of data into a CAG context window. RAG has evolved into “Agentic RAG,” where the system actively plans searches rather than just fetching keywords.

2. Which architecture is cheaper to run?

It depends on your Query Frequency:

- Use RAG if you ask a question once. You pay only for the retrieval and the small answer generation.

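- Use CAG if you ask many questions about the same document. You pay a high “upfront” cost to load the document into the cache (pre-computation), but subsequent questions are cheap because the model reuses the cached state instead of processing the document again.

- The Rule: As a rough rule of thumb, if you query a document more than ~5 times, caching it becomes cheaper than re-sending and reprocessing it on every query. The exact break-even depends on your provider’s cache pricing and the size of the document.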
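The break-even behind this rule is simple arithmetic, sketched below. Every number is a made-up cost unit chosen to keep the math readable, not a real provider price: `cache_setup_cost` bundles the one-time load plus any storage fee, and `cached_price_per_token` is the assumed discounted rate for tokens served from the cache.

```python
import math

def break_even_queries(doc_tokens, price_per_token, cached_price_per_token, cache_setup_cost):
    """Smallest number of queries at which caching beats re-sending the full document.

    Without a cache, n queries cost n * doc_tokens * price_per_token.
    With a cache, they cost cache_setup_cost + n * doc_tokens * cached_price_per_token.
    """
    saving_per_query = doc_tokens * (price_per_token - cached_price_per_token)
    if saving_per_query <= 0:
        return None  # caching never pays off if cached tokens are not cheaper
    return math.floor(cache_setup_cost / saving_per_query) + 1

# Illustrative cost units only, not real prices
n = break_even_queries(
    doc_tokens=100_000,
    price_per_token=1.0,
    cached_price_per_token=0.25,
    cache_setup_cost=300_000,
)
print(f"Caching wins from query #{n} onward")
```

With these invented inputs the two approaches cost the same at four queries and caching pulls ahead at five. Plug in your own provider's rates, because a cheaper setup or a deeper cache discount moves the break-even much earlier.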

3. Can I combine them? (The “RAG-to-CAG” Pattern)

Yes, this is the dominant design pattern for 2026.

Instead of choosing one, developers are using RAG to search their massive database for the top 50 relevant documents and then dynamically loading those 50 documents into a CAG (Cached) environment for the user to chat with. This gives you the “Infinity” of RAG with the “IQ and Speed” of CAG during the actual conversation.
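Here is a minimal sketch of that pattern: retrieve once at the start of a session, then reuse the same preloaded context for every follow-up question. The `CachedSession` class and its word-overlap scoring are hypothetical stand-ins. A real system would use vector search for the retrieval step and hand the assembled context to the provider's caching API rather than keeping a string in memory.

```python
class CachedSession:
    """Retrieve once, then answer every follow-up from the same preloaded context."""

    def __init__(self, corpus, top_n=2):
        self.corpus = corpus
        self.top_n = top_n
        self.context = None   # filled by the first question, reused afterwards
        self.retrievals = 0   # counts how often the (slow) RAG step actually ran

    def _retrieve(self, question):
        # Toy relevance score: number of words shared with the question
        q_words = set(question.lower().split())
        ranked = sorted(
            self.corpus,
            key=lambda doc: len(q_words & set(doc.lower().split())),
            reverse=True,
        )
        self.retrievals += 1
        return ranked[: self.top_n]

    def build_prompt(self, question):
        if self.context is None:  # RAG step runs only once per session
            self.context = "\n".join(self._retrieve(question))
        return f"Context:\n{self.context}\n\nQuestion: {question}"

corpus = [
    "refund policy for enterprise customers",
    "office parking rules",
    "enterprise refund escalation steps",
]
session = CachedSession(corpus, top_n=2)
first = session.build_prompt("what is the enterprise refund policy")
second = session.build_prompt("who approves escalation")
print(session.retrievals)
```

The second question skips retrieval entirely and reuses the context selected for the first. The catch is that a follow-up on a new topic may need a fresh retrieval, so real implementations usually add a rule for when to rebuild the cache.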

4. What is “Context Engineering”?

Context Engineering is the new Prompt Engineering. In a CAG-first world, you are no longer just writing instructions; you are architecting the model’s memory. This discipline requires rigorously structuring data—converting raw PDFs into clean Markdown, standardizing tables, and enforcing header hierarchy—before it ever hits the cache. Think of it as Technical SEO for LLMs: if the model cannot parse your document’s structure, it cannot reason over it effectively.
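As one small example of what "enforcing header hierarchy" can look like, the sketch below rewrites Markdown headings so that no level is skipped (an `###` directly under a `#` becomes `##`). It is a simplified illustration: it assumes ATX-style `#` headings and does not skip fenced code blocks, where a `#` comment line would be misread as a heading.

```python
import re

HEADING = re.compile(r"^(#{1,6})\s+(.*)$")

def normalize_headings(markdown):
    """Clamp each heading so it is at most one level deeper than the previous heading."""
    out = []
    prev_level = 0
    for line in markdown.splitlines():
        match = HEADING.match(line)
        if match:
            level = min(len(match.group(1)), prev_level + 1)
            prev_level = level
            line = "#" * level + " " + match.group(2).strip()
        out.append(line)
    return "\n".join(out)

raw = "# Manual\n### Setup\nPlug it in.\n## Safety\n##### Warnings"
clean = normalize_headings(raw)
print(clean)
```

A consistent outline gives the model reliable signals about which section a passage belongs to, which matters more once the whole document sits in the cache at the same time.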

5. Why not just use a 10 Million Token Context Window?

Latency and Cost.

While Gemini 1.5 Pro was shown handling up to 10 million tokens in research testing (its generally available window is smaller, at up to 2 million), feeding that much data into the model for every single question is agonizingly slow (latency) and prohibitively expensive. CAG solves this by “freezing” that state so you don’t have to re-read the books for every new question, but the initial load time still exists.

The Bottom Line: Do not delete your Vector Database. The winning strategy for 2026 is hybrid: Use RAG to find the relevant data, and CAG to reason deeply across it.