Learn how Retrieval Augmented Generation (RAG) improves AI accuracy by combining large language models with external knowledge sources. Explore multimodal RAG and knowledge graph approaches.

Retrieval Augmented Generation (RAG): Improving AI Accuracy with External Knowledge

Large Language Models (LLMs) like GPT, Claude, and others are powerful, but they have a major limitation: they rely mainly on the data they were trained on. This means they can sometimes produce outdated information or hallucinated answers. To address this problem, the AI community introduced Retrieval Augmented Generation (RAG).

RAG is an architecture that improves AI responses by retrieving relevant information from external data sources before generating the final answer. Instead of relying only on the model’s internal knowledge, the system combines information retrieval with text generation.

This approach significantly improves the accuracy, reliability, and contextual relevance of AI-generated responses.

According to Databricks, around 60% of enterprise LLM applications use Retrieval Augmented Generation, while 30% rely on multi-step reasoning chains. Studies also show that RAG-based responses can be up to 43% more accurate than responses generated using only fine-tuned language models.

What is Retrieval Augmented Generation (RAG)?

Retrieval Augmented Generation is a framework that combines two main components:

- Information Retrieval

- Language Generation

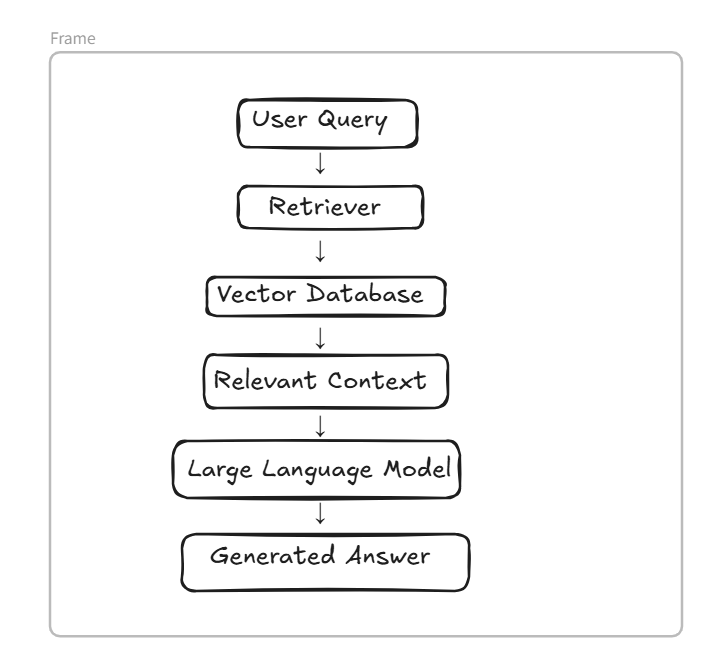

The process works in the following steps:

- A user submits a query.

- The system searches external knowledge sources such as documents, databases, or vector stores.

- Relevant information is retrieved.

- The retrieved content is passed to the language model.

- The language model generates a final response using that context.

In simple terms, RAG allows AI systems to look up information before answering, similar to how humans search for references before giving a response.

This process helps reduce hallucinations and grounds answers in retrieved data rather than in the model's memory alone.
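The retrieve-then-generate flow described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a production design: the sample documents are invented, the retriever is a crude word-overlap ranker rather than a real search index, and `generate` is a hypothetical placeholder standing in for a call to an actual language model.

```python
import re

# Toy knowledge base (invented sample data). In practice this would be a
# document store, database, or vector store.
DOCUMENTS = [
    "The return window for online orders is 30 days from delivery.",
    "Support is available by email from 9am to 5pm on weekdays.",
    "Gift cards cannot be exchanged for cash.",
]


def tokenize(text):
    """Lowercase the text and return its set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query, documents, top_k=1):
    """Rank documents by the number of words they share with the query."""
    query_words = tokenize(query)
    ranked = sorted(
        documents,
        key=lambda doc: len(query_words & tokenize(doc)),
        reverse=True,
    )
    return ranked[:top_k]


def build_prompt(query, context_docs):
    """Place the retrieved text in front of the question."""
    context = "\n".join(f"- {doc}" for doc in context_docs)
    return f"Answer using only this context:\n{context}\n\nQuestion: {query}"


def generate(prompt):
    """Hypothetical placeholder for an LLM call; it only echoes the prompt size."""
    return f"[model response to a prompt of {len(prompt)} characters]"


def answer(query):
    """Run the full pipeline: retrieve, build the prompt, generate."""
    docs = retrieve(query, DOCUMENTS)
    return generate(build_prompt(query, docs))


if __name__ == "__main__":
    question = "What is the return window for orders?"
    print(build_prompt(question, retrieve(question, DOCUMENTS)))
    print(answer(question))
```

Swapping the word-overlap ranker for an embedding-based search, or the placeholder for a real model client, changes the individual functions but not the overall shape of the pipeline.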

Why RAG is Important for Large Language Models

Traditional LLMs depend entirely on training data. Once trained, updating their knowledge requires expensive retraining or fine-tuning.

RAG solves this problem by allowing models to access external and continuously updated information sources.

Some key advantages include:

Improved Accuracy

By retrieving real documents and data, RAG provides more reliable answers compared to models that rely only on pre-trained knowledge.

Up-to-Date Information

External databases can be updated regularly, allowing AI systems to provide current information without retraining the model.

Reduced Hallucinations

Since responses are grounded in retrieved documents, the likelihood of generating incorrect information decreases.

Enterprise Scalability

Organizations can integrate internal knowledge bases, documentation, and company data into the RAG pipeline.

Because of these advantages, RAG has become one of the most widely used architectures for enterprise AI applications.

Limitations of Traditional RAG Systems

Although RAG improves AI responses, traditional implementations still face several challenges.

Complex Query Handling

Basic RAG systems may struggle with multi-step reasoning or queries that require connecting information from multiple sources.

Context Understanding

Sometimes the retriever fetches documents that are relevant in keywords but not in deeper context.

Data Diversity

Traditional RAG mainly works with text data and may not handle images, audio, or video effectively.

These limitations have encouraged researchers and engineers to develop advanced RAG architectures.

Advancements: Multimodal RAG and Knowledge Graphs

Recent developments in AI have introduced new techniques that enhance the capabilities of RAG systems. Two major advancements are Multimodal RAG and Knowledge Graph-based RAG.

Multimodal RAG

Traditional RAG systems process only textual data. However, real-world information exists in many formats.

Multimodal RAG extends the system to work with multiple data types, including:

- Text

- Images

- Audio

- Video

For example, a multimodal RAG system could analyze an image, retrieve related information from a database, and then generate an explanation.

This makes AI systems more powerful and context-aware, especially in fields like healthcare, education, and multimedia analysis.

Knowledge Graph-Based RAG

Another significant improvement is the integration of knowledge graphs.

A knowledge graph represents information as interconnected entities and relationships. Instead of storing information as isolated documents, it organizes data in a structured network.

This structure helps the system understand how different concepts relate to each other.

For example:

Tesla → founded by → Martin Eberhard and Marc Tarpenning

Elon Musk → CEO of → Tesla

Tesla → produces → Electric Vehicles

Using this structured representation, the AI system can retrieve information more intelligently.
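To make the idea concrete, here is a small sketch that stores two of the triples above and answers a question that requires following two relationships in a row. The helper names (`build_index`, `subjects_of`, `facts_about`) are hypothetical and chosen for illustration; a real system would more likely use a graph database and a query language.

```python
from collections import defaultdict

# (subject, relation, object) triples taken from the example above.
TRIPLES = [
    ("Elon Musk", "CEO of", "Tesla"),
    ("Tesla", "produces", "Electric Vehicles"),
]


def build_index(triples):
    """Index triples by object so relationships can be followed backwards."""
    incoming = defaultdict(list)
    for subject, relation, obj in triples:
        incoming[obj].append((relation, subject))
    return incoming


def subjects_of(incoming, obj, relation):
    """Return every subject linked to `obj` by `relation`."""
    return [s for r, s in incoming.get(obj, []) if r == relation]


def facts_about(triples, entity):
    """Render every triple that mentions the entity as a plain sentence."""
    return [f"{s} {r} {o}" for s, r, o in triples if entity in (s, o)]


incoming = build_index(TRIPLES)

# Two-hop question: who is the CEO of a company that produces electric vehicles?
producers = subjects_of(incoming, "Electric Vehicles", "produces")
ceos = [
    person
    for company in producers
    for person in subjects_of(incoming, company, "CEO of")
]

if __name__ == "__main__":
    print(producers)
    print(ceos)
    # These sentences could be passed to a language model as compact context.
    print(facts_about(TRIPLES, "Tesla"))
```

Neither triple alone answers the question; the answer comes from chaining them, which is the kind of connection that document-level retrieval can miss.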

Microsoft research has shown that GraphRAG approaches can reduce token usage by 26% to 97%, making the system more efficient while improving the quality of generated responses.

Performance Improvements with Advanced RAG

Recent developments in RAG architectures have produced strong results in both research benchmarks and real-world applications.

For example:

- Knowledge graph-based RAG achieved 86.31% accuracy on the RobustQA benchmark, outperforming many traditional RAG methods.

- A follow-up study by Sequeda and Allemang found that integrating an ontology into the system reduced the overall error rate to 20%.

These improvements demonstrate how structured knowledge and advanced retrieval techniques can greatly enhance AI system performance.

Real-World Applications of RAG

RAG is already being used across various industries to build intelligent AI systems.

Enterprise Knowledge Assistants

Companies use RAG to allow employees to search internal documentation and receive accurate AI-generated answers.

Customer Support Automation

RAG systems can retrieve relevant help articles and generate precise responses to customer queries.

Research and Data Analysis

Researchers can query large document collections and obtain summarized insights.

Developer Tools

RAG is used in coding assistants to retrieve documentation and generate context-aware programming help.

LinkedIn reported that combining RAG with knowledge graphs reduced customer support resolution time by 28.6%, demonstrating its practical value in large-scale systems.

Future of Retrieval Augmented Generation

The future of RAG is closely tied to advancements in AI infrastructure and data integration.

Several trends are expected to shape the next generation of RAG systems:

- Improved retriever models

- Hybrid search (vector + keyword search)

- Better multimodal capabilities

- Integration with structured knowledge bases

- More efficient token usage

As these technologies evolve, RAG will play a critical role in building trustworthy and scalable AI systems.
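Of the trends listed above, hybrid search is the easiest to illustrate. The sketch below blends a keyword score with a vector similarity score using a weight `alpha`. Everything in it is an assumption made for illustration: the documents are invented, the three-dimensional "embeddings" are hand-picked numbers rather than the output of a real embedding model, and a weighted sum is only one of several ways to combine the two signals.

```python
import math
import re

# doc_id -> (text, hand-made embedding). The vectors are illustrative values only.
DOCS = {
    "doc_a": ("How to reset a forgotten password", [0.9, 0.1, 0.0]),
    "doc_b": ("Recovering access when you cannot log in", [0.8, 0.2, 0.1]),
    "doc_c": ("Password requirements for new accounts", [0.2, 0.9, 0.1]),
}


def tokenize(text):
    """Lowercase the text and return its set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def keyword_score(query, text):
    """Fraction of query words that also appear in the document."""
    query_words = tokenize(query)
    if not query_words:
        return 0.0
    return len(query_words & tokenize(text)) / len(query_words)


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def hybrid_search(query, query_vec, docs, alpha=0.5):
    """Rank doc ids by alpha * vector score + (1 - alpha) * keyword score."""
    scored = []
    for doc_id, (text, vec) in docs.items():
        score = alpha * cosine(query_vec, vec) + (1 - alpha) * keyword_score(query, text)
        scored.append((doc_id, score))
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return [doc_id for doc_id, _ in scored]


if __name__ == "__main__":
    query, query_vec = "reset password", [1.0, 0.0, 0.0]
    print(hybrid_search(query, query_vec, DOCS, alpha=0.0))  # keyword only
    print(hybrid_search(query, query_vec, DOCS, alpha=0.5))  # blended
```

With keywords alone, `doc_c` outranks `doc_b` because it contains the word "password", even though `doc_b` is closer in meaning to the query. Blending in the vector score moves `doc_b` above `doc_c` while keeping the exact match, `doc_a`, at the top.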

Conclusion

Retrieval Augmented Generation has become a key technique for improving the accuracy and reliability of large language models.

By combining external knowledge retrieval with language generation, RAG enables AI systems to produce responses that are more accurate, up-to-date, and contextually relevant.

With advancements such as multimodal RAG and knowledge graph integration, the framework continues to evolve and address the limitations of traditional AI systems.

As more organizations adopt AI-driven applications, RAG will remain an essential architecture for building intelligent and reliable AI solutions.

FAQ

What is Retrieval Augmented Generation (RAG)?

Retrieval Augmented Generation is an AI architecture that improves LLM responses by retrieving relevant information from external knowledge sources before generating an answer.

What problem does RAG solve?

Retrieval Augmented Generation addresses the problem of outdated or incorrect responses generated by large language models. By retrieving relevant information from external knowledge sources, RAG helps make AI responses more accurate and context-aware.

How does RAG work?

RAG works by combining information retrieval with language generation. When a user submits a query, the system first retrieves relevant documents from a knowledge base or vector database. The retrieved information is then passed to a language model, which uses it as context to generate a more accurate response.

How is RAG different from fine-tuning?

Fine-tuning updates the model by retraining it with new data, which can be expensive and time-consuming. RAG, on the other hand, retrieves external information at runtime, allowing the model to access updated knowledge without retraining.

What is a vector database?

A vector database stores numerical representations (embeddings) of text data. These embeddings allow the system to perform semantic search and retrieve relevant documents based on meaning rather than exact keywords.

Will RAG remain relevant in the future?

Many researchers believe RAG will remain a core architecture for AI applications because it combines the strengths of language models with real-time information retrieval.